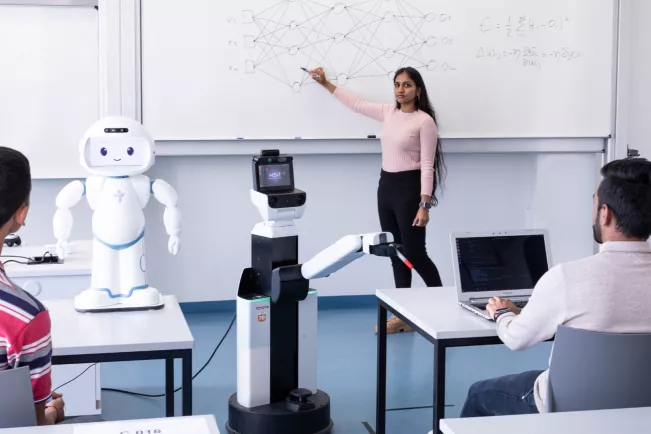

Institute for Artificial Intelligence and Autonomous Systems (A2S)

Advanced Topics in AI and Robotics

Date

Monday, 26 June 2023

Time

17:00 - 18:30

Online event

Webex

Abstract

Dynamic, uncontrolled human–robot interactions (HRIs) require robots to adapt to changes in the human’s behavior and intentions. Among the relevant signals, non-verbal cues such as the human’s gaze can give the robot important information about the human’s current engagement in the task and about whether the robot should continue its current behavior. However, the ability of robots to adapt to these non-verbal cues through reinforcement learning (RL) is still underdeveloped. We proposed an active exploration algorithm for RL during HRI in which the reward function is the weighted sum of the human’s current engagement and variations of this engagement. We used a parameterized action space in which a meta-learning algorithm simultaneously tunes exploration in the discrete action space (e.g., moving an object) and in the space of continuous movement characteristics (e.g., velocity, direction, strength, and expressivity). In this talk, I will first show that this algorithm reaches state-of-the-art performance in the non-stationary multi-armed bandit paradigm. I will then show its application to a simulated HRI task, where it outperforms continuous parameterized RL with either passive or active exploration based on different existing methods. Finally, I will present tests of its performance in a more realistic version of the same HRI task, in which human engagement is estimated in practice from visual cues of the head pose. The algorithm can detect and adapt to perturbations in human engagement of different durations. Altogether, these results suggest a novel, efficient, and robust framework for robot learning during dynamic HRI scenarios.
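As a rough illustration of the reward described in the abstract, the sketch below computes a weighted sum of the current engagement and its change since the previous time step. The function name `engagement_reward`, the single `weight` parameter, the choice of weights that sum to one, and the use of a simple first difference for the "variation" are all assumptions made for this example; the abstract does not specify them, and the speaker's actual formulation may differ.

```python
def engagement_reward(engagement: float,
                      previous_engagement: float,
                      weight: float = 0.5) -> float:
    """Illustrative reward: weighted sum of engagement and its variation.

    Assumptions (not taken from the abstract):
    - engagement values are scalars, e.g. in [0, 1];
    - the variation is the first difference between consecutive time steps;
    - the two weights are `weight` and `1 - weight`.
    """
    variation = engagement - previous_engagement
    return weight * engagement + (1.0 - weight) * variation


if __name__ == "__main__":
    # Hypothetical engagement trace: the human first loses interest, then re-engages.
    trace = [0.8, 0.6, 0.3, 0.5, 0.9]
    for previous, current in zip(trace, trace[1:]):
        reward = engagement_reward(current, previous)
        print(f"engagement={current:.1f} reward={reward:.3f}")
```

With a reward of this shape, a drop in engagement is penalized immediately through the variation term, even while the absolute engagement level is still fairly high.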

Short Bio

Mehdi Khamassi is a research director at the Centre National de la Recherche Scientifique (CNRS), working at the Institute of Intelligent Systems and Robotics (ISIR) on the campus of Sorbonne Université, Paris, France. He has a dual background in Computer Science (engineering diploma in 2003 from Ecole Nationale Supérieure d'Informatique pour l'Industrie et l'Entreprise, Evry, with a specialization in Artificial Intelligence and Statistical Modeling) and Cognitive Sciences (Cogmaster in 2003 from Université Pierre et Marie Curie (UPMC), Paris). He then pursued a PhD in Cognitive Neuroscience at UPMC and Collège de France between 2003 and 2007. He serves as co-director of studies for the CogMaster program at Ecole Normale Supérieure (PSL) / EHESS / University of Paris Cité. He is editor-in-chief of Intellectica and serves as editor for several other journals, including Frontiers in Neurorobotics, Frontiers in Decision Neuroscience, ReScience X, and Neurons, Behavior, Data analysis and Theory. His main research topics include decision-making and reinforcement learning in robots and humans, the role of social and non-social rewards in learning, and ethical questions raised by autonomous machine decision-making. His main methods are computational modeling, design of new neuroscience experiments to test model predictions, analysis of experimental data, design of AI algorithms for robots, and behavioral experimentation with humans, non-human animals, and robots.

Papers relevant for the talk

1. Rémi Dromnelle, Erwan Renaudo, Mohamed Chetouani, Petros Maragos, Raja Chatila, et al. Reducing computational cost during robot navigation and human-robot interaction with a human-inspired reinforcement learning architecture. International Journal of Social Robotics, 2022. Available: https://doi.org/10.1007/s12369-022-00942-6

2. Abolfazl Zaraki, Mehdi Khamassi, Luke J Wood, Gabriella Lakatos, Costas S Tzafestas, et al. A Novel Reinforcement-Based Paradigm for Children to Teach the Humanoid Kaspar Robot. International Journal of Social Robotics, 2020, 12 (3), pp.709-720. Available: https://doi.org/10.1007/s12369-019-00607-x

3. Mehdi Khamassi, George Velentzas, Theodore Tsitsimis, Costas Tzafestas. Robot Fast Adaptation to Changes in Human Engagement During Simulated Dynamic Social Interaction With Active Exploration in Parameterized Reinforcement Learning. IEEE Transactions on Cognitive and Developmental Systems, 2018, 10 (4), pp.881-893. Available: https://doi.org/10.1109/TCDS.2018.2843122

The lecture will be held in English and is aimed at students and staff of H-BRS. All interested parties are cordially invited.

Contact

Location

Sankt Augustin

Room

C 216

Address

Grantham-Allee 20

53757 Sankt Augustin

Telephone

+49 2241 865 9608